The massive growth in modern data centers and the new requirements of AI workloads are an occasion to rethink data center architecture. Traditionally, data center operations were organized into several tiers. Today, cutting-edge infrastructure often employs one of two contrasting strategies. Converged infrastructure brings tiers together within the same devices to work in lockstep, while composable infrastructure separates out (“disaggregates”) tiers, putting them on equal footing and allowing for more flexibility.

The disaggregated approach allows data center managers to scale compute and storage independently. While the flexibility required by AI is a central motivation, the appeal is broader than that. In this article, we’ll take a look at some pros and cons of composable infrastructure, with particular attention to the role HDDs play in these disaggregated arrangements.

What Is Composable Infrastructure?

Before we arrive at the gritty details, let’s straighten out some terminology. Tech publications use the words “disaggregated infrastructure” and “composable infrastructure” in mutually inconsistent ways, sometimes also using the hybrid “composable disaggregated infrastructure” (CDI).

For the purposes of this article, we will use “disaggregated” in a general sense, as an adjective. Think of disaggregation as a direction in which data center infrastructure can move, where resources like storage and compute are broken down into discrete components that can be more easily scaled and rearranged. We will reserve the term “composable infrastructure” for cutting-edge varieties of disaggregated storage with high-speed connections, usually NVMe over Fabrics (NVMe-oF). Note that NVMe is the protocol (compare to SAS or SATA), whereas NVMe-oF adds a messaging layer that allows it to operate across network fabrics.

It’s helpful to contrast composable infrastructure with converged infrastructure. But first, for a point of comparison, let’s examine traditional data center setups.

Traditional Arrangements

Traditionally, data centers used what is known as the three-tier application architecture. To oversimplify a bit, you can think of tiers as separate functions run on separate infrastructure:

- Presentation tier: The user interface and communication.

- Application tier: This is where the bulk of data is processed.

- Data tier: This is where data is stored and managed.

The bulk of compute takes place in the application tier, and the bulk of storage takes place in the data tier. Of course, things are a bit more nuanced in practice: you need some short-term storage in the application tier, and the storage devices in the data tier need a certain amount of compute to keep things organized.

Networking is the glue that holds these tiers together. Traditionally, storage is attached to the rest of the stack through Network-Attached Storage (NAS) or a Storage Area Network (SAN). Crucially, the presentation and data tiers are not directly connected in traditional architectures: the application tier acts as an intermediary.

Two Roads Diverged: Composable and Converged Infrastructure

When it comes to data centers, speed matters. Given multiple tiers connected by networking equipment, you can speed things up by getting the tiers closer together, or by investing in faster connections between tiers.

In converged infrastructure, storage, servers, and networking are all merged together in tightly packaged solutions. Once different functions are brought together in this way, they cease to be tiers, since they no longer run on separate physical infrastructure. So-called hyperconverged infrastructure takes things further by managing these combined resources in software, with software-defined storage pooling the drives across nodes.

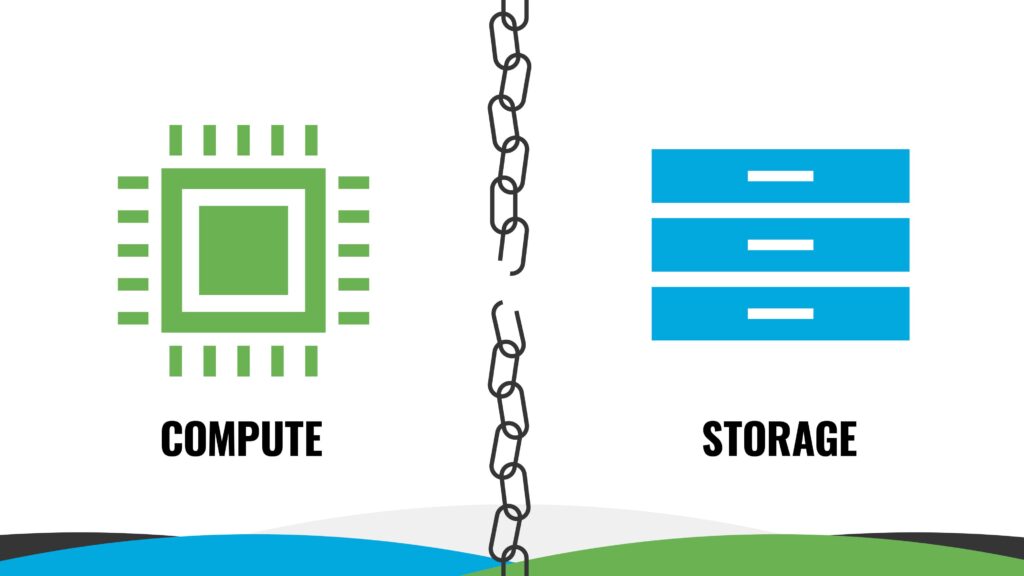

By contrast, disaggregated architectures keep functions separate. In a sense, the traditional three-tier architecture is already disaggregated, since different functions take place in different tiers. But disaggregation is a matter of degree. In composable infrastructure, tiers are no longer as tightly “coupled”: you can rearrange the connections between CPUs and storage devices far more easily.

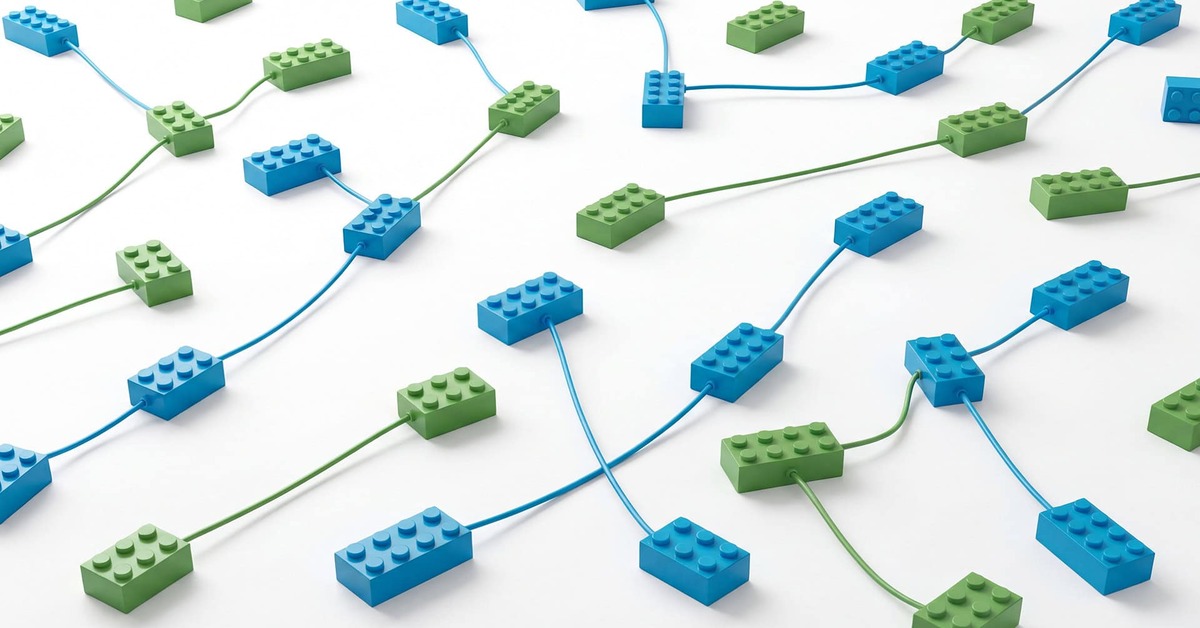

To put it simply, composable infrastructure spreads each function, such as compute or storage, across multiple independent devices, which are then joined with lightning-fast NVMe-oF connections. An Application Programming Interface (API) then lets you manage compute, storage, and networking as abstracted resource pools, assembling them into systems as workloads require. This gives data center managers more fine-grained control than either traditional three-tier architecture or converged infrastructure.
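
The following minimal Python sketch makes the idea of an API that composes resources more concrete. It assumes a single shared pool of compute and storage devices. The names `Device`, `ResourcePool`, and `compose` are hypothetical and are not modeled on any particular vendor's API. Real composable systems also have to handle fabric addressing, security, and failure domains, and this sketch ignores all of those.

```python
from dataclasses import dataclass, field


@dataclass
class Device:
    """A single disaggregated resource, e.g. a compute node or an NVMe-oF drive."""
    dev_id: str
    kind: str       # "compute" or "storage" (illustrative categories)
    capacity: int   # cores for compute, TB for storage (hypothetical units)


@dataclass
class ResourcePool:
    """Hypothetical pool of free devices; not any real vendor's API."""
    free: list[Device] = field(default_factory=list)

    def add(self, device: Device) -> None:
        # Scaling out: a new device joins the pool without touching existing ones.
        self.free.append(device)

    def claim(self, kind: str, amount: int) -> list[Device]:
        """Greedily take free devices of one kind until `amount` is covered."""
        chosen, total = [], 0
        for dev in [d for d in self.free if d.kind == kind]:
            if total >= amount:
                break
            chosen.append(dev)
            total += dev.capacity
        if total < amount:
            raise RuntimeError(f"not enough free {kind}: need {amount}, have {total}")
        for dev in chosen:
            self.free.remove(dev)
        return chosen

    def release(self, devices: list[Device]) -> None:
        # Returned devices become available to the next composed system.
        self.free.extend(devices)


def compose(pool: ResourcePool, cores: int, tb: int) -> dict[str, list[Device]]:
    """Assemble a logical server from independent compute and storage devices."""
    compute = pool.claim("compute", cores)
    try:
        storage = pool.claim("storage", tb)
    except RuntimeError:
        pool.release(compute)  # roll back so the pool is left as it was
        raise
    return {"compute": compute, "storage": storage}


if __name__ == "__main__":
    pool = ResourcePool()
    for i in range(4):
        pool.add(Device(f"cpu{i}", "compute", 32))  # four 32-core compute nodes
    for i in range(6):
        pool.add(Device(f"hdd{i}", "storage", 24))  # six 24 TB drives
    server = compose(pool, cores=64, tb=100)
    print("compute:", [d.dev_id for d in server["compute"]])
    print("storage:", [d.dev_id for d in server["storage"]])
    print("left in pool:", [d.dev_id for d in pool.free])
```

Compute and storage are claimed separately here, so a request for more drives never forces the caller to take more CPUs. That independence is the property the rest of this article relies on.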

Benefits of Disaggregated Storage

Disaggregated architectures have a number of qualities that make them an attractive option for many data center use cases.

Independent Scaling

What all disaggregated approaches have in common is that you can scale compute and storage independently. Again, this is most easily seen by contrast with converged infrastructure.

Suppose you have converged or hyperconverged infrastructure, such as custom chassis that bundle together compute, storage, and networking. Now, suppose you want more storage space. Since everything comes packaged together, you’ll need to buy more compute along with your storage if you want to scale.

If you want to get more compute, you have the same problem: you’ll need to get extra storage you might not use. This leads to underutilization and overprovisioning, which costs money. Composable infrastructure lets you avoid this issue: you can buy what you need, when you need it.
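
Some back-of-the-envelope arithmetic shows the overprovisioning problem. The minimal Python sketch below compares buying fixed converged nodes against buying compute and storage in independent units. All sizes are hypothetical. The model also ignores price, power, and rack space, and only counts how much of each resource ends up idle.

```python
import math


def converged_purchase(cores_needed: int, tb_needed: int,
                       node_cores: int, node_tb: int) -> tuple[int, int]:
    """Converged: every node bundles a fixed amount of compute and storage."""
    # Whole nodes must be bought until the scarcer resource is satisfied.
    nodes = max(math.ceil(cores_needed / node_cores), math.ceil(tb_needed / node_tb))
    return nodes * node_cores, nodes * node_tb


def composable_purchase(cores_needed: int, tb_needed: int,
                        unit_cores: int, unit_tb: int) -> tuple[int, int]:
    """Composable: compute and storage are bought in independent units."""
    cores = math.ceil(cores_needed / unit_cores) * unit_cores
    tb = math.ceil(tb_needed / unit_tb) * unit_tb
    return cores, tb


def utilization(needed: int, bought: int) -> float:
    """Fraction of purchased capacity the workload actually uses."""
    return needed / bought


if __name__ == "__main__":
    need_cores, need_tb = 128, 1000  # hypothetical, storage-heavy workload
    conv = converged_purchase(need_cores, need_tb, node_cores=64, node_tb=100)
    comp = composable_purchase(need_cores, need_tb, unit_cores=32, unit_tb=24)
    for label, (cores, tb) in (("converged", conv), ("composable", comp)):
        print(f"{label}: {cores} cores ({utilization(need_cores, cores):.0%} used), "
              f"{tb} TB ({utilization(need_tb, tb):.0%} used)")
```

With these made-up figures, storage drives the node count in the converged case. The purchase therefore ends up with 640 cores to cover a 128-core need. The composable purchase lands at 128 cores and 1,008 TB.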

In traditional arrangements, you often need to “scale up” by adding more compute or capacity to an existing server or appliance. This means your ability to scale is limited by the server in question. Like composable infrastructure, converged infrastructure lets you “scale out” by adding new nodes rather than supplementing an existing node. However, converged scale-out isn’t fine-grained: you need to buy a whole new unit of packaged compute and storage in order to expand.

Flexibility

The granular nature of composable infrastructure leads to a great deal of flexibility. You can more easily modify your data center to handle new types of workloads, since you no longer need specialized, siloed infrastructure. And whether the workload is new or old, composable infrastructure can help you deploy resources faster.

Flexibility is part of what makes composable infrastructure so attractive for AI-focused data centers. AI is changing rapidly, so it’s hard to anticipate how much compute or storage will be needed, or what kinds of workloads your data center will need to support. Composable infrastructure lets data centers stay nimble enough to adapt to whatever sea change hits AI next.

Composable infrastructure lets you be more opportunistic with procurement, because you can add more devices, and different sorts of devices, as needed rather than filling empty slots in a pre-built chassis. This is particularly handy in years when storage supply chains are constrained and IT asset managers need to get creative in sourcing storage devices.

Challenges of Disaggregated Storage

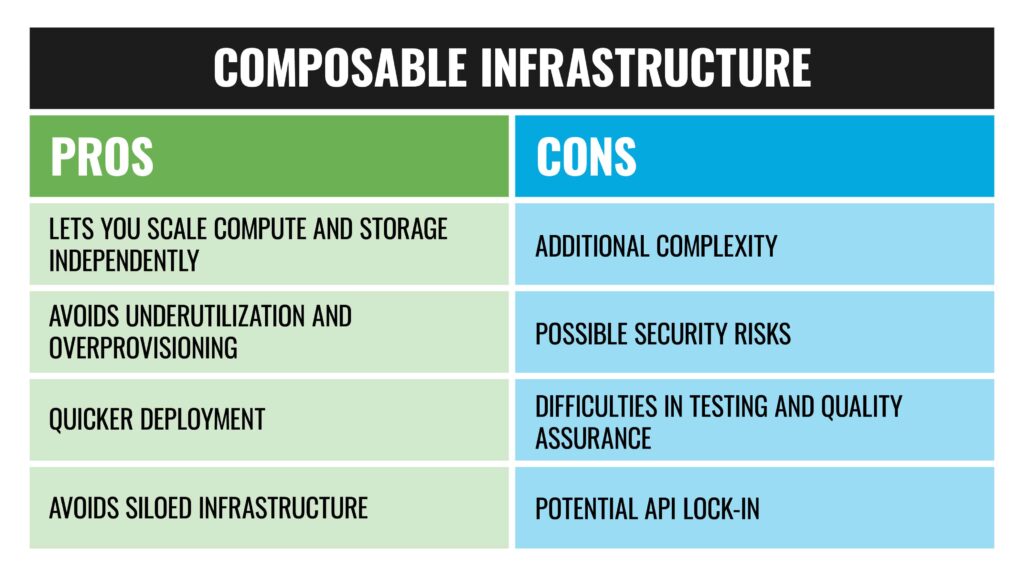

While excellent for the right workloads, composable infrastructure isn’t without its drawbacks. And these drawbacks can mostly be traced to one source: with flexibility comes additional complexity. Without resources arranged into tiers or custom chassis, infrastructure can be more complicated to keep track of and control.

This complexity can mean a need for more staff, or more specialized staff. It can also create security issues: if you lose track of where data resides at any given time, the risk of data exposure increases. Testing and quality assurance can also be more complicated for composable infrastructure.

To mitigate these potential issues, you need careful API management to keep devices communicating and working in concert. However, APIs come with a learning curve. Out-of-the-box solutions can flatten that curve, but they increase costs and raise the specter of vendor lock-in.

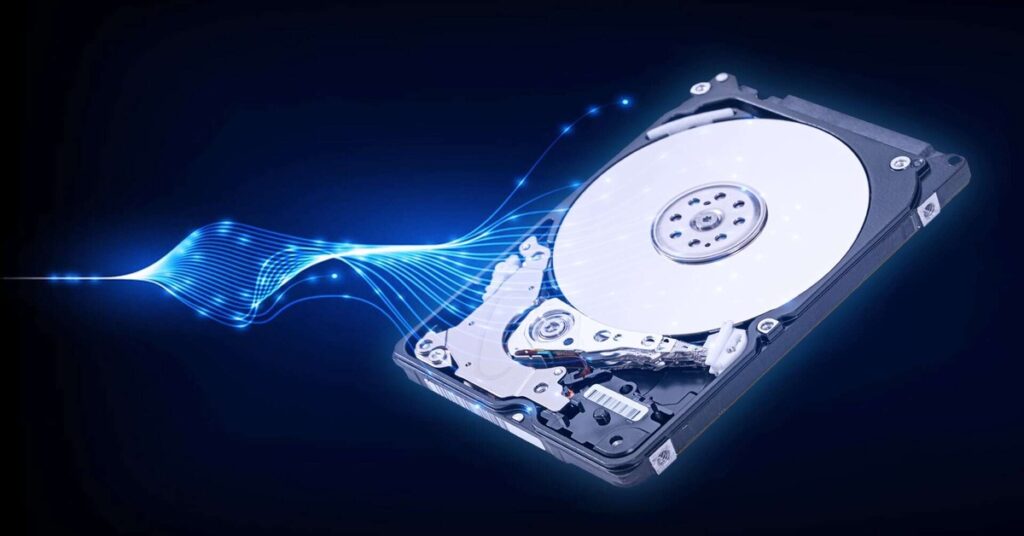

HDD and Disaggregated Storage

Hard drives remain the workhorse of the modern data center. Sky-high demand and the rising volume of data being generated mean that HDDs will keep this status for the foreseeable future. Given the importance of HDDs, how does spinning media fit into data centers built on disaggregated architectures?

Hybrid Storage

One of the biggest perks of disaggregated storage is that its inherent flexibility makes it easier to mix and match different sorts of storage media. This makes it an ideal architecture for hybrid data centers, which combine the massive capacity of HDDs with the high performance of SSDs. These hybrid architectures are valuable in turn because they allow organizations to use the most cost-effective storage device for the task at hand.

AI data pipelines illustrate this well. These pipelines have distinct stages, each with separate storage needs. When data is prepared and ingested, high-capacity SSDs fit the bill, while efficient training and inference call for high-performance compute SSDs. But just as important are the HDDs that bookend the whole process, storing data cheaply at scale both before it’s ingested and after it’s generated. Hybrid data centers let all of these media work together efficiently.
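
One way to picture this is to treat each storage medium as its own independently sized pool and map pipeline stages onto it. The minimal Python sketch below assumes the stage-to-media mapping just described. The stage names and data volumes are hypothetical. A real deployment would also weigh throughput, latency, and data lifecycle policies, not just capacity.

```python
from enum import Enum


class Media(Enum):
    HDD = "mass-capacity HDD pool"
    CAPACITY_SSD = "high-capacity SSD"
    PERFORMANCE_SSD = "high-performance compute SSD"


# Hypothetical stage names; the mapping follows the pipeline described in the text.
STAGE_TO_MEDIA = {
    "raw_archive": Media.HDD,               # data at rest before ingestion
    "prepare_ingest": Media.CAPACITY_SSD,   # data preparation and ingest
    "train": Media.PERFORMANCE_SSD,         # training
    "inference": Media.PERFORMANCE_SSD,     # inference
    "generated_output": Media.HDD,          # generated data kept cheaply at scale
}


def place(stage: str) -> Media:
    """Return the media pool a pipeline stage would draw from."""
    try:
        return STAGE_TO_MEDIA[stage]
    except KeyError:
        raise ValueError(f"unknown pipeline stage: {stage!r}") from None


def size_pools(volumes_tb: dict[str, float]) -> dict[Media, float]:
    """Sum the capacity each media pool must provide across all stages."""
    totals: dict[Media, float] = {}
    for stage, tb in volumes_tb.items():
        media = place(stage)
        totals[media] = totals.get(media, 0.0) + tb
    return totals


if __name__ == "__main__":
    # Hypothetical volumes in TB for one pipeline.
    volumes = {"raw_archive": 800, "prepare_ingest": 120,
               "train": 40, "generated_output": 300}
    for media, tb in size_pools(volumes).items():
        print(f"{media.value}: {tb:.0f} TB")
```

In a disaggregated setup, each total can be provisioned on its own. Growing the HDD archive, for example, does not require adding performance SSDs or compute.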

Scaling Cheaply

Incidentally, Seagate and Western Digital, two of the largest hard drive OEMs, have both taken a keen interest in disaggregated architectures. And no wonder: what they offer is mass capacity at a low cost per TB, and, as discussed above, easy scale-out is one of the primary virtues of composable infrastructure. For example, in a disaggregated setting, you can arrange high-capacity hard drives in a separate pool that can be expanded as needed, independently of compute.

A Bright Future

What keeps composable infrastructure fast and efficient are speedy interconnects like NVMe-oF, a protocol which enables increased throughput and reduced latency. Unfortunately, HDDs are not, generally speaking, NVMe-native. But what if they were?

Seagate has worked on answering this very question. The firm demonstrated the first NVMe HDD back in 2021. That same year, NVMe 2.0 included HDD support. The Open Compute Project (OCP) released specs for NVMe HDD the following year. Analyst Tom Coughlin believes NVMe HDD will dominate enterprise and data center HDD by 2028.

What’s more, in developing NVMe HDDs, Seagate clearly has the drawbacks of converged infrastructure in mind. In 2025, the firm showcased a hybrid storage architecture that it billed as “particularly beneficial for enterprises needing flexible, composable storage solutions for AI workflows.” The message is clear: composable infrastructure paves the way for NVMe HDDs to be full-fledged members of a high-throughput AI infrastructure.

Divide and Conquer

By letting you scale compute and storage independently, disaggregated approaches to data center infrastructure give modern data centers the flexibility the current era calls for. They allow more fine-grained control of data center resources, help cut down on overprovisioning, and allow for flexible deployments in the face of new workloads.

Composable infrastructure is good for AI, but not just for AI. Flexibility has other upsides, such as more leeway in procurement, faster scaling, and lower costs from avoiding underutilization. In these disaggregated architectures, HDDs will be right where they’ve always been: doing the heavy lifting, storing data cheaply at scale.

Get in touch to see how Horizon Technology can help you procure affordable storage which meets the needs of your data center.