The rapid rise of AI is straining conventional storage setups, pushing data centers to balance several critical but conflicting demands: massive capacity, high speed (low latency and high throughput), and affordability. While much depends on the task at hand, it’s generally acknowledged that SSDs offer speed at a much greater acquisition cost, while HDDs are budget-friendly but slower.

Hybrid data centers combine SSD performance with HDD affordability. Such setups are a practical middle ground for handling the multi-stage data pipelines found in AI workflows.

This article explores how hybrid setups help data centers tackle AI workload challenges. Many hands make light work, with OEMs and storage platform companies alike crafting solutions that enable HDDs to play a crucial role in AI data pipelines. What emerges as a key theme is the importance of disaggregated setups that help keep GPU clusters saturated without sacrificing the cost benefits of HDDs.

Niche Roles in the Storage Mix

Much ink has been spilled over whether QLC SSDs will begin to replace nearline HDDs over the next decade. However, pitting HDDs against SSDs is like comparing apples to oranges—at least when these drives are being used in ways that play to their strengths.

HDDs stand out for their high capacity at a low cost per TB. This makes them ideal for storing bulk data where speed isn’t the primary concern, such as raw training data or post-inference content that doesn’t need low-latency access.

SSDs, on the other hand, offer the low latency, high IOPS, and high throughput required for quick access and active processing. They excel at preparing data to be ingested into AI workflows, but come at a higher cost per TB.

Hybrid storage architectures attempt to blend the best of both worlds. They tap SSDs for high-speed cache (temporary holding) and for tasks like training checkpoints, optimizing speed across AI pipelines. The whole process is bookended with HDDs, which store less frequently accessed data and so help trim overall storage costs.
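
The tiering idea described above can be sketched in a few lines of Python. This is a minimal illustration rather than any vendor's actual policy: the `Dataset` fields, the `choose_tier` function, and the `HOT_READS_PER_DAY` threshold are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical threshold: data read at least this often per day counts as "hot".
HOT_READS_PER_DAY = 10


@dataclass
class Dataset:
    name: str
    reads_per_day: float
    is_checkpoint: bool = False


def choose_tier(ds: Dataset) -> str:
    """Return 'ssd' for checkpoints and frequently read data, otherwise 'hdd'."""
    if ds.is_checkpoint or ds.reads_per_day >= HOT_READS_PER_DAY:
        return "ssd"
    return "hdd"


# Illustrative datasets with made-up access rates.
datasets = [
    Dataset("raw_training_archive", reads_per_day=0.1),
    Dataset("active_training_shards", reads_per_day=500),
    Dataset("model_checkpoint", reads_per_day=1, is_checkpoint=True),
    Dataset("generated_content", reads_per_day=0.5),
]

placement = {ds.name: choose_tier(ds) for ds in datasets}
for name, tier in placement.items():
    print(f"{name}: {tier}")
```

A real system would likely weigh more than read frequency (object size, recency, pipeline stage), but the sketch shows the basic split: hot data and checkpoints on flash, everything else on disk.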

A potential hurdle for hybrid architectures is the supply vulnerability of high-cap HDDs. Surging demand due to the AI boom has pushed lead times of enterprise HDDs from just a few weeks to beyond 52 weeks. But it has since become clear that this is part of a broader problem, with NAND suffering similar shortages.

Western Digital’s AI Data Cycle Framework

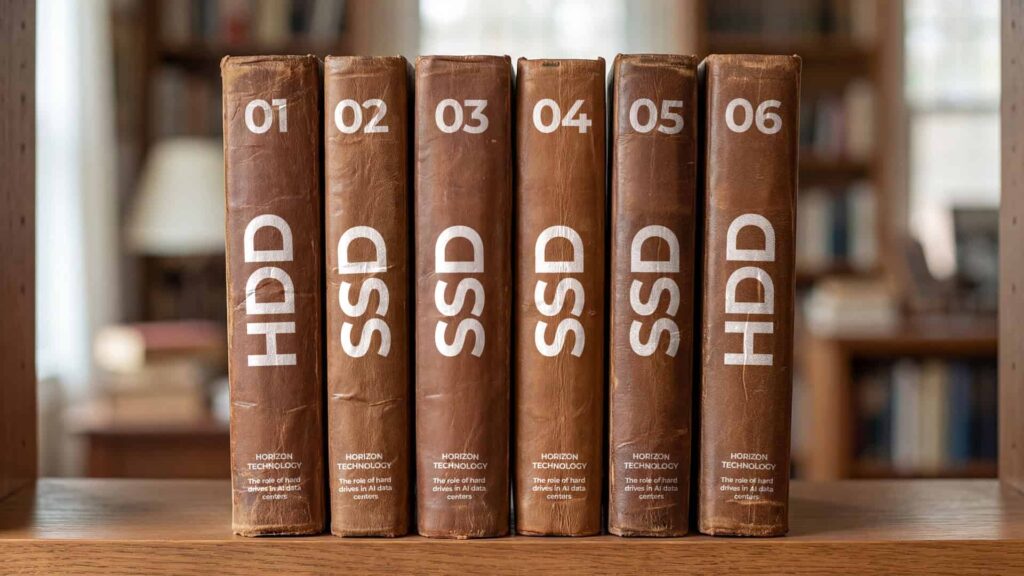

We can get more fine-grained when it comes to the data pipeline. Western Digital has developed a simple six-stage AI data cycle framework which outlines the movement of data from start to finish. It pairs an appropriate storage type with each stage, creating a balanced mix of SSDs and HDDs. As a major HDD OEM, WD unsurprisingly emphasizes the suitability of its own devices. Still, it’s a useful frame for thinking about hybrid data centers.

The cycle begins with ‘Archives of raw data content’, which uses high-cap HDDs. Next comes ‘Data prep and ingest’, which utilizes high-cap SSDs. The core processing Stages 3, 4 and 5 – ‘AI model training’, ‘Interface and prompting’, and ‘AI inference engine’ – rely on a combination of high-cap SSDs and high-performance compute SSDs. The cycle concludes with the 6th stage: ‘New content generation’, with the output again stored on high-cap HDDs.
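
For readers who think in data structures, the stage-to-media pairing summarized above can be written down as a small lookup table. The sketch below encodes only what this article says about the framework; the `AI_DATA_CYCLE` and `stages_using` names are illustrative and not part of anything Western Digital publishes.

```python
# Stage number, stage name, and storage media, as summarized in this article.
AI_DATA_CYCLE = [
    (1, "Archives of raw data content", ["high-capacity HDD"]),
    (2, "Data prep and ingest", ["high-capacity SSD"]),
    (3, "AI model training", ["high-capacity SSD", "high-performance compute SSD"]),
    (4, "Interface and prompting", ["high-capacity SSD", "high-performance compute SSD"]),
    (5, "AI inference engine", ["high-capacity SSD", "high-performance compute SSD"]),
    (6, "New content generation", ["high-capacity HDD"]),
]


def stages_using(keyword: str) -> list[int]:
    """Return the stage numbers whose media list mentions the keyword."""
    return [
        num
        for num, _name, media in AI_DATA_CYCLE
        if any(keyword in m for m in media)
    ]


print("HDD stages:", stages_using("HDD"))
print("SSD stages:", stages_using("SSD"))
```

Laid out this way, the "bookend" pattern is easy to see: HDDs appear only at the first and last stages, with flash handling everything in between.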

Hybrid NVMe: Seagate’s Path to AI Storage

The Western Digital framework gives a general sense of how drives fit into the AI data cycle. What it doesn’t address, however, is the glue that connects each step in the cycle to the next: the hardware and protocols necessary to get data where it needs to be with as little complication as possible.

NVMe provides just such a glue. While some hybrid solutions involve putting SSDs and HDDs side-by-side in hybrid arrays, Seagate is betting that NVMe-native HDDs will give spinning media a role in disaggregated setups. These setups are increasingly favored for AI tasks due to their flexibility and scalability.

Non-Volatile Memory Express (NVMe) is an interface protocol that links the host with storage devices. Originally developed for flash, it offers high throughput that could be key to fast data movement in a hybrid setup.

Seagate is now working on NVMe-native HDDs, opening the door for more flexible hybrid architectures. Currently, special adapters are needed to place HDDs alongside NVMe SSDs, as HDDs usually use the older SATA or SAS interfaces. These bridges create CPU bottlenecks, a problem which NVMe HDDs handily sidestep. By putting high-capacity HDDs and SSDs on a single high-speed interface, this approach enables better GPU-to-storage access via DPUs (data processing units). With these hurdles removed, NVMe-native HDDs enable a more versatile and efficient hybrid environment.

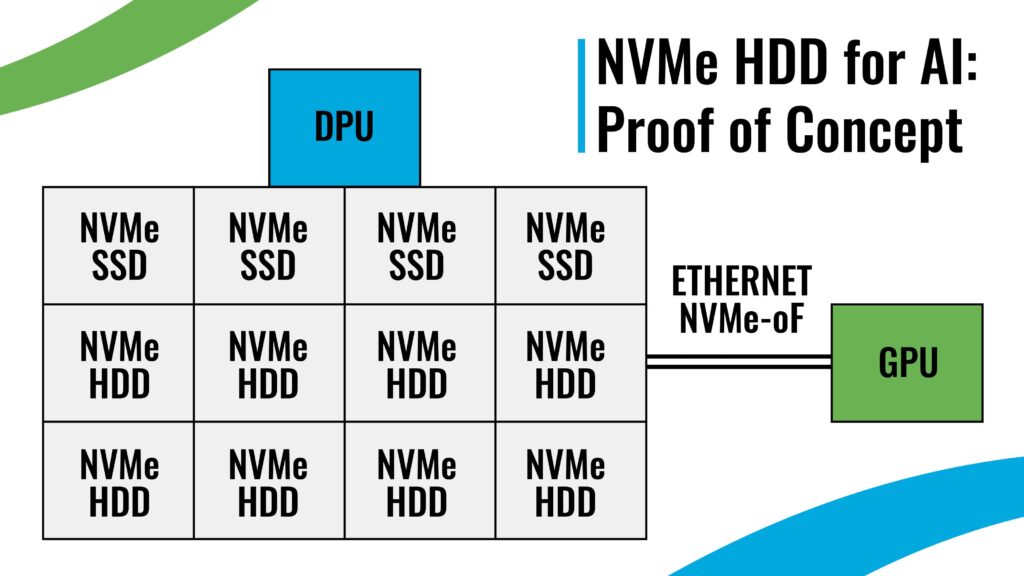

Seagate’s Proof of Concept

Putting this to the test, Seagate demonstrated a proof of concept at Nvidia GTC 2025 by combining four 4TB NVMe SSDs with eight 32TB HDDs. The hybrid combination matched the performance of four 64TB SSDs but cost only one-sixth as much. Running Nvidia’s BlueField DPU and AIStore software, the hybrid setup shows the possibility of scaling AI pipelines affordably, without relying on an all-flash setup.
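
A quick back-of-the-envelope check puts those figures in context. The sketch below uses only the drive counts, capacities, and the one-sixth cost ratio quoted above. The variable names are illustrative, and the per-TB comparison is a derived estimate that assumes the one-sixth figure refers to total acquisition cost for each configuration.

```python
# Drive counts and capacities quoted for the proof of concept.
hybrid_ssd_tb = 4 * 4      # four 4TB NVMe SSDs
hybrid_hdd_tb = 8 * 32     # eight 32TB HDDs
hybrid_tb = hybrid_ssd_tb + hybrid_hdd_tb   # 16 + 256 = 272 TB
all_flash_tb = 4 * 64                       # four 64TB SSDs = 256 TB

# Share of the hybrid configuration's raw capacity that sits on HDD.
hdd_share = hybrid_hdd_tb / hybrid_tb

# Assumption: "one-sixth as much" means total cost of hybrid / total cost of all-flash.
hybrid_relative_cost = 1 / 6

# Hybrid cost per raw TB, expressed as a fraction of all-flash cost per raw TB.
relative_cost_per_tb = hybrid_relative_cost * all_flash_tb / hybrid_tb

print(f"Hybrid raw capacity:    {hybrid_tb} TB")
print(f"All-flash raw capacity: {all_flash_tb} TB")
print(f"HDD share of hybrid capacity: {hdd_share:.1%}")
print(f"Hybrid cost per TB relative to all-flash: {relative_cost_per_tb:.1%}")
```

Under that assumption, the hybrid configuration offers slightly more raw capacity than the all-flash one, so its cost per raw TB comes out a little below one-sixth of the all-flash figure.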

Analyst Tom Coughlin predicts that NVMe HDDs will start rolling out by the end of 2026 and could lead the HDD enterprise storage market by 2028. This evolution aligns with the impending transition of CPU-centric traditional data centers into GPU-centric AI factories. As vendors race to upgrade, Seagate’s NVMe HDD is positioned to support this shift, allowing disaggregated storage systems that are engineered for AI from the ground up.

Putting It All Together

A constant theme in comparisons between all-flash and hybrid setups is how much rides on how everything is arranged. For optimal performance, you need more than just the right devices, in the right places, with the right connections. The overall architecture should also be fashioned with an eye towards the particular needs of the task at hand, such as AI training.

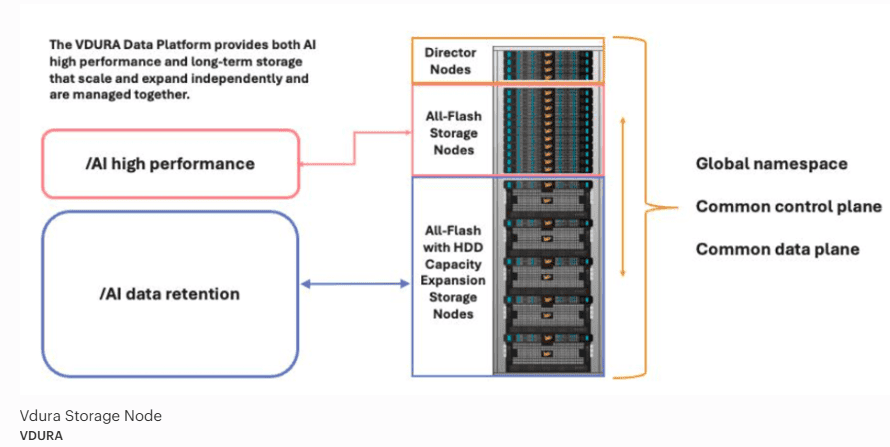

Many companies, such as HPE, NetApp, and Dell, offer hybrid arrays. However, we’ll use the VDURA data platform as a case study, since it’s designed with high-performance computing and AI/ML storage in mind. It also has two other features that tie in with our previous discussion: it is an NVMe-first architecture, and it is designed to allow the use of commodity drives, including hard drives.

In its whitepaper, VDURA describes design specifications that pair QLC NAND SSD (Phison Pascari 128 TB) with high-cap HDD (30+ TB) to address AI and HPC workload needs. This pairing allows for flexibility in the SSD to HDD ratio within a setup, bringing down TCO without compromising performance. The company suggests that an 80% HDD to 20% SSD blend can meet the needs of most operations.
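
To see why the HDD-to-SSD ratio matters so much, it helps to work through a capacity-weighted average. The sketch below is an illustration only: the `blended_cost_per_tb` function is a hypothetical helper, and the per-TB prices are placeholder values, not VDURA's figures or market quotes. It also covers acquisition cost alone, not full TCO (power, cooling, refresh cycles, and so on).

```python
def blended_cost_per_tb(hdd_share: float, hdd_cost: float, ssd_cost: float) -> float:
    """Capacity-weighted acquisition cost per TB for a given HDD share (0 to 1)."""
    if not 0.0 <= hdd_share <= 1.0:
        raise ValueError("hdd_share must be between 0 and 1")
    return hdd_share * hdd_cost + (1.0 - hdd_share) * ssd_cost


# Placeholder prices in dollars per TB, chosen only to make the arithmetic visible.
HDD_COST_PER_TB = 15.0
SSD_COST_PER_TB = 75.0

blend = blended_cost_per_tb(0.8, HDD_COST_PER_TB, SSD_COST_PER_TB)
all_flash = blended_cost_per_tb(0.0, HDD_COST_PER_TB, SSD_COST_PER_TB)
saving = 1.0 - blend / all_flash

print(f"80/20 blend: ${blend:.2f} per TB")
print(f"All-flash:   ${all_flash:.2f} per TB")
print(f"Acquisition saving vs all-flash: {saving:.0%}")
```

Swapping in real quotes for the two price constants gives a first-order comparison. The wider the gap between HDD and SSD prices, the more an HDD-heavy blend saves.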

VDURA’s Defense of Hybrid Storage

Crucially, VDURA claims that its latest data platform delivers fast performance at 60% less TCO than all-flash competitors. It achieves this through data management techniques such as “V-ScaleFlow”, which moves data between HDDs and QLC SSDs. The firm also claims that by enabling the use of hyperscale HDDs, it halves the price and power consumption per PB compared to flash-only solutions.

VDURA has taken an active part in debates between proponents of all-flash and hybrid arrangements. For VDURA’s VP of Product Management, Chris Girard, “$/TB reality still favors capacity HDD, by a wide margin.” This philosophy is summed up in their motto: “… buy flash for performance, disk for capacity, in one system”.

A Possible Future for HDD

When it comes to the storage mix in five or ten years, an educated guess is usually the best one can do. However, advances by Seagate and VDURA do suggest one possible future of HDDs in the AI data center.

Spinning media could increasingly figure in disaggregated, NVMe-connected setups designed to usher data smoothly between mixed media. Even though HDDs cannot take full advantage of the throughput NVMe enables, NVMe-native HDDs could avoid the CPU bottlenecks caused by adapters and bridges.

Meanwhile, architectures like VDURA’s would allow disaggregated setups to save by utilizing hyperscale HDDs where appropriate. Bringing commodity hardware into the mix also lets IT asset managers get creative with storage procurement. This is useful in years when, like now, the supply of storage devices is particularly tight.

Second Thoughts? TCO and Compression

Despite its balance, the hybrid approach still divides opinion. Even granting that SSDs cost more upfront than HDDs, the debate over TCO continues. For example, analyst Chris Mellor, leaning on device stats from Supermicro and a study by Solidigm, argues that flash wins in the long run for all types of data, including raw and new content.

Mellor’s argument is that SSD can utilize space better by squeezing in more bytes and consuming less power. The Solidigm study claimed that a QLC SSD setup could run at 79.5% higher power efficiency compared to hybrids, while enabling more AI infrastructure. Reasoning along these lines leads SSD enthusiasts to conclude that the TCO scale favors all-flash, despite the higher initial cost.

However, compression also plays a role in TCO, though its importance depends on the workload. Many all-flash setups are paired with software that compresses data significantly. This matters little when storing something like video footage, which usually arrives pre-compressed. Training data, however, typically does not arrive pre-compressed, meaning that the time and compute spent compressing it before storing it on a space-efficient SSD must be factored into costs.

Obviously, this doesn’t suggest that compression, or SSDs for that matter, are unnecessary. The real moral is that with TCO, everything depends on the specifics. Compression is just one part of data ingestion prep, and usually the final step. You need somewhere to store all that data before it goes through the preparation process, and HDDs are a natural fit here. The decompression process can also add to lag in the overall training process.
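
To make the compression argument concrete, here is a rough sketch of how a compression ratio and a compression overhead might feed into cost per logical TB. Everything in it is an assumption for illustration: the `effective_cost_per_tb` helper, the prices, the ratios, and the compute overhead are placeholders, not figures from Mellor, Solidigm, or any vendor.

```python
def effective_cost_per_tb(
    raw_cost_per_tb: float,
    compression_ratio: float,
    compute_cost_per_tb: float = 0.0,
) -> float:
    """Cost per logical TB stored: media cost shrinks with the compression
    ratio, while the compute spent compressing each logical TB is added on."""
    if compression_ratio <= 0:
        raise ValueError("compression_ratio must be positive")
    return raw_cost_per_tb / compression_ratio + compute_cost_per_tb


# Placeholder inputs (dollars per TB); a ratio of 1.0 means the data does not shrink.
SSD_RAW_COST = 75.0

# Pre-compressed video: stored as-is, so no gain and no compression overhead.
video = effective_cost_per_tb(SSD_RAW_COST, compression_ratio=1.0)

# Uncompressed training data: shrinks 2:1, but compressing it costs compute.
training = effective_cost_per_tb(
    SSD_RAW_COST, compression_ratio=2.0, compute_cost_per_tb=5.0
)

print(f"Pre-compressed video on SSD:  ${video:.2f} per logical TB")
print(f"Compressed training data:     ${training:.2f} per logical TB")
```

The sketch ignores decompression lag and the staging capacity needed before compression, both of which the discussion above flags as real costs. It is meant only to show that the benefit of compression depends on the workload's ratio and overhead rather than being a fixed discount.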

The Best of Both Worlds

Will NVMe-native HDD and disaggregated hybrid storage architectures be a match made in heaven, or prove more cumbersome than their all-flash alternatives? Only time will tell. However, advances by Seagate, VDURA, and others demonstrate the possibility of balancing performance with low-cost, large-scale expansion.

Deeper understanding of AI operations, new techniques for intelligent distribution of data between SSDs and HDDs, and the simplification of SSD-HDD interaction may prove key for unlocking a viable, wallet-friendly storage infrastructure for demanding AI pipelines. As questions around speed, power use and hardware limits grow into make-or-break factors for future AI advancement, hybrid storage architectures offer a promising balance between price and performance.

Contact Horizon Technology for help procuring affordable storage which meets the needs of your data center.